Building a secure, fast and fun authentication experience

A quick look at our infrastructure

The title already gave it away: we use Kubernetes. Our systems have been built on containers for a long time, and around December 2016 we migrated our custom Ansible/Docker setup to Kubernetes (starting with v1.3).

We run clusters on Amazon EC2 and on bare-metal machines. The clusters are partitioned into namespaces to give academies full autonomy over their infrastructure. Future posts will discuss our infrastructure in greater detail.

. . .

There’s (at least) one thing all Ginis have in common: a Google account.

Many of the tools and services we use day to day let us log in with Google, and we absolutely 💙 that. We have been happy G Suite users since day one and try to use Google logins wherever possible.

It simplifies onboarding and offboarding, enforces two-factor authentication and reduces the number of passwords and accounts we need to remember and manage.

A blind spot in our infrastructure

Until recently there was one big exception to this rule: Kubernetes. When we first deployed Kubernetes, we took the easy route and started with a static password file. This worked fine for the small number of academy users in our clusters, but the simplicity came at a cost:

- Users, groups and passwords are managed separately

- Every modification to the password file requires a restart of all API servers

- Passwords are created by a central entity and distributed to the user

- In many scenarios, permission and group membership updates require changes to both the password file and the RBAC definitions

- The password file was managed in a Git repository, so a few users could see all tokens. This made RBAC less effective and left room for impersonation.

Evaluating alternatives

Kubernetes offers a wide range of authentication options, but only a few qualified for evaluation given our needs:

- x509 client certificates

- OpenID Connect tokens

- Sticking with static password files

x509 certificates

At first glance x509 certificates looked like the perfect solution, as we already operate an internal PKI (Public Key Infrastructure). A later post will cover how we use Vault for this. We use TLS certificates for a wide range of use cases, and we have the experience and proper tooling in place. Benefits all the way down:

- Kubernetes group management via the certificate’s Organization (O) fields

- Well understood and battle tested technology

- PKI already in place

- It’s even possible to manage certificates within Kubernetes (only for kubelets, though)
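For readers who haven’t used certificate-based auth: Kubernetes takes the username from the Common Name (CN) of the certificate subject and the group memberships from its Organization (O) entries. A minimal Go sketch of that mapping, assuming a hypothetical user `jane@example.com` in a hypothetical group `ops-faculty`; the certificate is self-signed purely for illustration, whereas a real one would be signed by the cluster CA:

```go
package main

import (
	"crypto/ecdsa"
	"crypto/elliptic"
	"crypto/rand"
	"crypto/x509"
	"crypto/x509/pkix"
	"fmt"
	"math/big"
	"time"
)

// identityFromCert mirrors how Kubernetes reads a client certificate:
// CN becomes the username, every O entry becomes a group.
func identityFromCert(cert *x509.Certificate) (string, []string) {
	return cert.Subject.CommonName, cert.Subject.Organization
}

// newClientCert creates a self-signed client certificate (illustration only).
func newClientCert(user string, groups []string) (*x509.Certificate, error) {
	key, err := ecdsa.GenerateKey(elliptic.P256(), rand.Reader)
	if err != nil {
		return nil, err
	}
	tmpl := &x509.Certificate{
		SerialNumber: big.NewInt(1),
		Subject:      pkix.Name{CommonName: user, Organization: groups},
		NotBefore:    time.Now().Add(-time.Minute),
		NotAfter:     time.Now().Add(time.Hour),
		KeyUsage:     x509.KeyUsageDigitalSignature,
		ExtKeyUsage:  []x509.ExtKeyUsage{x509.ExtKeyUsageClientAuth},
	}
	der, err := x509.CreateCertificate(rand.Reader, tmpl, tmpl, &key.PublicKey, key)
	if err != nil {
		return nil, err
	}
	return x509.ParseCertificate(der)
}

func main() {
	cert, err := newClientCert("jane@example.com", []string{"ops-faculty"})
	if err != nil {
		panic(err)
	}
	user, groups := identityFromCert(cert)
	fmt.Println(user, groups) // jane@example.com [ops-faculty]
}
```

Group changes therefore mean issuing a new certificate, which is exactly where revocation starts to matter.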

No more time to waste! Let’s do this, … right?

There’s a catch

Moving to x509 certificates would solve a number of shortcomings of our current approach but also introduce a major security concern:

Kubernetes is currently unable to check the status of a presented client certificate: neither CRLs (Certificate Revocation Lists) nor OCSP (Online Certificate Status Protocol) are implemented.

This means a certificate cannot be revoked, and that is a dealbreaker for us. 😱


We need a way to block a lost or exposed certificate and to ensure that currently granted permissions are respected. There are ways to mitigate parts of this (short-lived certificates, skipping groups in RBAC and sticking with the CN), but it just didn’t feel right. What a bummer.

OpenID Connect

When I first looked at OIDC some time ago, it didn’t really click, and today I’m wondering why. This may remain a mystery…

It was this blog post by Joel Speed that brought my attention back to OIDC, and I immediately shared it with a few of my faculty members.

The next day we teamed up and deployed dex, an OIDC identity provider from the good folks at CoreOS, to our internal Kubernetes cluster. We got the proof of concept working in less than an hour and were very pleased with the results.

We configured dex to use Google as its backing identity provider and requested and renewed tokens with the included example application. After adjusting the Kubernetes API server configuration, we were able to use kubectl with our Google-approved JWT (JSON Web Token). We left the office with big grins on our faces.


Where’s the catch?

OIDC is not perfect and here are some issues we identified:

- Access can be revoked centrally, but the revocation is not immediately respected by the Kubernetes API. The id-token has a TTL (Time To Live), and as long as it has not expired you can still use it. Once it expires (after at most 24h), the login is blocked.

- You cannot use groups, because Google simply doesn’t include them in its tokens. You need to use individual email addresses in your RBAC definitions.

- Dependency on an external entity. It’s true that downtime of Google’s OIDC servers could affect our ability to manage the cluster. We see it as a minor issue, because we can always deploy a static password file to regain access without affecting anything that runs in the cluster.

Let’s not forget the benefits

- Fewer accounts, group memberships and passwords to manage

- 2nd factor (2FA) automatically enforced

- Impersonation impossible (or very very unlikely)

- No dependency on the Ops faculty to manage secrets or accounts: self-service

From proof of concept to production

We set aside a few hours the next day to continue the investigation and to find the best workflow for all Gini Kubernetes users.

The first question we wanted to answer was whether the extra layer introduced by dex provided enough benefits, as we were only interested in Google’s OIDC implementation for the foreseeable future. We realised that dex was not needed for our current setup and dropped it.

Next item on our list was the flow a user had to go through to get the tokens into the kubectl configuration.

To our surprise, getting an id-token and a refresh-token into the config proved rather tedious. We discovered Micah Hausler’s k8s-oidc-helper, and it did a great job of automating parts of the flow. Copying authorization codes from the browser into the terminal still didn’t feel frictionless to us, though.

We 💙 automation, and the whole process should require as little interaction as possible. We started thinking about a custom solution, and I’m very happy to share the results of these efforts with you: dexter.

The name was inspired by and derived from dex, and although dex was no longer part of our stack, we decided to stick with it. The Git repo had already been created and the walls were covered with flow diagrams and ideas… it was too late for a new name.

How is dexter different?

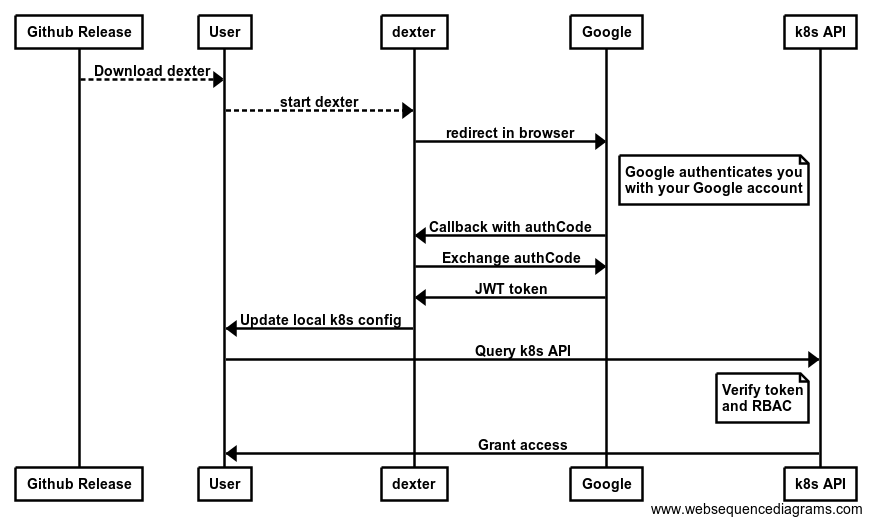

We wanted to limit user interaction to starting dexter and logging in to Google; no further interaction should be required. dexter achieves this by starting an HTTP server on localhost that receives Google’s callback in the OAuth2 web app flow. This allows us to fetch the tokens and update the kubectl config without any additional user interaction. Here’s the full flow:

The full OpenID Connect flow

A gif is worth a thousand words.

dexter automatically updates your ~/.kube/config. Here is a snippet of the created kubectl configuration:

kubectl automatically uses the refresh-token to renew the id-token when it expires, again without any additional user interaction.

We updated our RBAC configuration to be based on users’ email addresses.

Your API server will need these additional flags to verify Google OIDC tokens:

Sticking with static password files

Given the advantages of OIDC and dexter, it was an easy decision to leave our static password files behind. One less thing to worry about.

Conclusion

We are very happy with our OIDC setup and the feedback from internal users at Gini has been very positive. We would love to hear your feedback on dexter:

- Download the prebuilt version or build it yourself

- Open a GitHub issue with all the bugs you find

- Help us improve dexter and send pull requests

- https://github.com/gini/dexter

. . .

If you read this far, you should probably check out our open positions. We are looking for excellent tech people to join us.

At Gini, we want our posts, articles, guides, white papers and press releases to reach everyone. Therefore, we emphasize that female, male and other gender identities are all explicitly addressed in them. All references to persons refer to all genders, even when the generic masculine is used in content.